Abstract

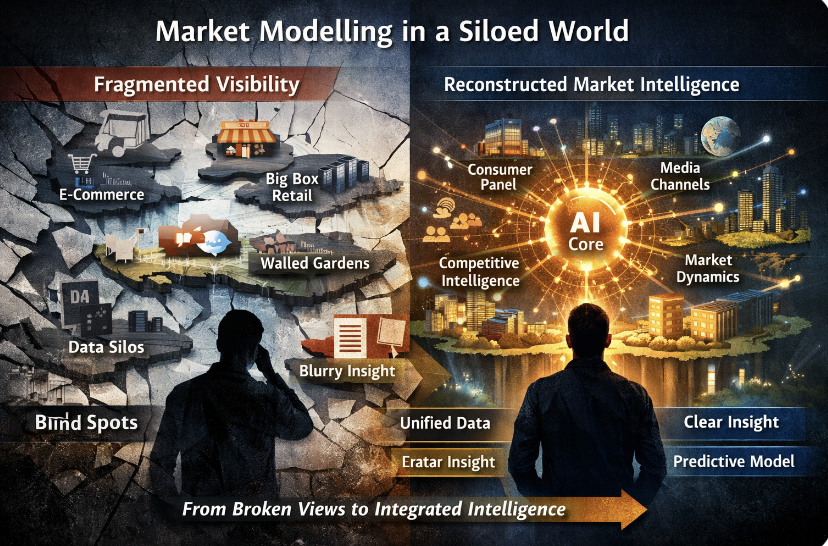

In the age of Big-Box retail, e-commerce, and algorithmically mediated digital media, the foundational visibility that once underpinned market modelling has eroded. Brands no longer share a common view of category size, competitive messaging, or share of voice. Instead, they operate within fragmented, opaque ecosystems where consumer reality itself is personalised and non-overlapping. Traditional research frameworks—built on stable segmentation and sampling—are increasingly misaligned with this fluid, micro-segmented world.

This post argues that we are witnessing an epistemic collapse of marketing models. Yet, within this disruption lies an opportunity. AI, when extended beyond internal optimisation to encompass market-wide modelling, can help reconstruct a coherent view of the competitive landscape. The future may belong not to agencies or platforms as we know them, but to new intelligence layers that simulate markets in real time—turning uncertainty into strategic foresight.

Before the era of Big-Box retail and e-commerce, brands operated with a reasonable degree of epistemic comfort. Sampling-based retail audits, though imperfect, offered a shared and broadly accepted view of category size and market share. In parallel, the era of mass media ensured that advertising itself was a visible commons. Every brand could see what every other brand was saying. Share of voice was measurable, comparable, and strategically actionable.

That world has quietly dissolved.

The rise of Big-Box retail and e-commerce did not merely add new channels—it punched structural holes into the measurement architecture of markets. Retail audits, designed for fragmented physical retail, could not fully capture these new nodes of concentrated commerce. Brands compensated, imperfectly, through consumer panels and surveys.

Then came digital media—and with it, a far more profound rupture.

Unlike mass media, digital advertising is not a commons. It is a series of walled gardens governed by opaque algorithms across platforms such as Google, Meta, Amazon and Netflix. Messaging is no longer broadcast; it is selectively revealed. Two consumers in the same household may inhabit entirely different advertising realities.

The consequence is not merely fragmentation—it is invisibility.

A brand today has, at best, a partial view of its competitive environment. It cannot reliably know which messages its competitors are deploying, to whom, how often, or with what effect. Share of voice, once a foundational strategic metric, becomes probabilistic at best, illusory at worst.
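Here is what "probabilistic" means in practice. Share of voice is usually a brand's exposures (or spend) divided by the category total. If a brand can see only a sample of category exposures, for instance through a consumer panel, share of voice stops being a known number and becomes an estimate with an error band. The sketch below assumes exactly that setup. The counts are hypothetical, and the Wilson score interval is one standard choice for a proportion, not a method this post prescribes.

```python
import math

def share_of_voice_interval(brand_exposures: int, category_exposures: int, z: float = 1.96):
    """Wilson score interval for a brand's share of voice (assumed setup).

    Treats each sampled category ad exposure as a Bernoulli draw:
    1 if it belonged to the brand, 0 otherwise.
    Returns (point_estimate, lower, upper).
    """
    n = category_exposures
    p = brand_exposures / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, centre - half, centre + half

# Hypothetical panel observation: 30 of 200 sampled category exposures were the brand's.
sov, low, high = share_of_voice_interval(30, 200)
print(f"SOV ~ {sov:.0%} (95% interval {low:.1%} to {high:.1%})")
```

With 30 of 200 sampled exposures, the point estimate is 15 percent, but the interval runs from roughly 11 to 21 percent. The binomial model also assumes independent exposures. Algorithmic targeting clusters exposures, so the real uncertainty is likely wider.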

We are, in effect, witnessing the epistemic collapse of marketing.

And when visibility collapses, models follow.

For decades, market research rested on a deceptively simple assumption: that populations could be segmented into reasonably homogeneous groups, and that a small sample—25 to 30 respondents per segment—could yield statistically robust insights. This assumption held when demographic and socio-economic variables were reasonable proxies for behaviour.

They no longer are.

The information age, accelerated by social media, has produced not segments but shards—micro-cohorts defined by fluid identities, shifting affiliations, and algorithmically mediated experiences. Two consumers of the same age, income, and geography may exhibit entirely divergent consumption patterns.

The result is model fragility.

Traditional sampling frameworks, built on stable segmentation, are increasingly mis-specified. They measure a world that no longer exists. Unsurprisingly, both the advertising and market research industries find their remit shrinking—ceded, in part, to the very platforms that have rendered them blind.
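The arithmetic behind this fragility is simple. The precision of a segment estimate scales with the within-segment spread divided by the square root of the sample size. A 30-person cell is therefore only as reliable as the segment is homogeneous. The sketch below uses a normal approximation and hypothetical spreads. It illustrates the point and does not calibrate any real study.

```python
import math

def ci_half_width(sigma: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% confidence-interval half-width for a segment mean.

    Normal approximation: z * sigma / sqrt(n), where sigma is the
    within-segment standard deviation of the behaviour being measured.
    """
    return z * sigma / math.sqrt(n)

n = 30  # respondents per segment, per the classic rule of thumb

# Hypothetical within-segment spreads of, say, monthly category spend.
homogeneous_sigma = 10.0  # members of the segment behave alike
fragmented_sigma = 40.0   # same demographic cell, now split into divergent "shards"

tight = ci_half_width(homogeneous_sigma, n)
loose = ci_half_width(fragmented_sigma, n)

# Precision scales with sigma / sqrt(n), so a 4x wider spread needs 16x the sample.
needed = math.ceil(n * (fragmented_sigma / homogeneous_sigma) ** 2)

print(f"homogeneous segment: +/- {tight:.1f}")
print(f"fragmented segment:  +/- {loose:.1f}")
print(f"respondents needed to restore precision: {needed}")
```

At n = 30, quadrupling the spread within a cell quadruples the error band. Restoring the original precision would take 480 respondents, sixteen times the classic rule of thumb.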

As George Box famously observed, “All models are wrong, but some are useful.” The problem we now face is more acute: our models are becoming less useful precisely when we need them most.

And as Nassim Nicholas Taleb reminds us in The Black Swan, “We tend to fool ourselves into believing we understand the past, which implies that the future is knowable.” In today’s fragmented, algorithmically mediated markets, that illusion is becoming increasingly untenable.

Enter AI—not as a tool of incremental optimisation, but as a force of structural reconstitution.

The age of AI will deeply impact all sectors of the economy, including consumer marketing. In the medium term, over a decade or two, it could result in marketing becoming a discipline in which AI avatars of brands market to AI avatars of consumers. Over a longer horizon, say two to three decades, an age of abundance driven by sky-high productivity gains could change the very relationship between products and services, with basic products and services available to all for free.

In the near term, AI is already reshaping marketing operations. Brands are deploying agentic systems atop internal data lakes to drive efficiency, responsiveness, and real-time decision-making. Large Language Models are internalising routine communication tasks—copy generation, customer response, and even elements of campaign orchestration.

But this is only half the story.

These internal systems, powerful as they are, remain inward-looking. They optimise within the boundaries of what the brand already knows. What is missing is a coherent, continuously updated model of the market as a whole—one that captures not just the brand’s data but also the dynamic interplay among consumers, competitors, channels, and context.

This is the gap. And it is a large one.

The opportunity, therefore, is not for another advertising agency or research firm in its current form. It is for a new kind of external intelligence layer—one that plugs into a brand’s internal AI systems and extends them outward into the market.

Such a system would rest on three foundational pillars.

First, a continuously evolving, large-scale consumer panel—not static, but dynamically resized and reweighted to reflect the fluidity of real-world behaviour.

Second, a single-source, tech-forward measurement architecture capable of capturing exposure across both mass and digital media—across channels, categories, brands, and messages—reconstructing, as far as possible, the lost visibility of the competitive landscape.

Third, a predictive, dynamic model of the market that integrates consumer behaviour, competitive activity, and exogenous factors—economic, technological, regulatory, and geopolitical—to simulate and evaluate alternative strategies before they are deployed.
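The post does not say how the first pillar's panel would be "reweighted". One standard technique from survey statistics is raking, also called iterative proportional fitting. Raking scales respondent weights one attribute at a time until the weighted panel matches known population shares on every attribute. The sketch below is a minimal illustration under stated assumptions. The attribute names, levels, and target shares are hypothetical, and the sketch covers reweighting only, not resizing.

```python
from collections import defaultdict

def rake(respondents, targets, iterations=200, tol=1e-9):
    """Iterative proportional fitting ("raking") of panel weights.

    respondents: list of dicts of attribute values, e.g. {"age": "18-34", "channel": "online"}
    targets: {attribute: {level: target_share}}, shares summing to 1 per attribute;
             every level seen in the panel must have a target, and vice versa.
    Returns one weight per respondent; weights sum to len(respondents).
    """
    n = len(respondents)
    weights = [1.0] * n
    for _ in range(iterations):
        max_change = 0.0
        for attr, shares in targets.items():
            # Current weighted total for each level of this attribute.
            totals = defaultdict(float)
            for r, w in zip(respondents, weights):
                totals[r[attr]] += w
            # Rescale so this attribute's weighted margin hits its target share.
            for i, r in enumerate(respondents):
                factor = shares[r[attr]] * n / totals[r[attr]]
                max_change = max(max_change, abs(factor - 1.0))
                weights[i] *= factor
        if max_change < tol:  # every margin already matches its target
            break
    return weights

# Hypothetical panel and target shares, for illustration only.
panel = [
    {"age": "18-34", "channel": "online"},
    {"age": "18-34", "channel": "store"},
    {"age": "35+", "channel": "store"},
    {"age": "35+", "channel": "store"},
]
targets = {
    "age": {"18-34": 0.5, "35+": 0.5},
    "channel": {"online": 0.4, "store": 0.6},
}
weights = rake(panel, targets)
print([round(w, 3) for w in weights])  # → [1.6, 0.4, 1.0, 1.0]
```

In this example the raw panel over-represents store shoppers. Raking therefore up-weights the lone young online respondent to 1.6 and shrinks the young store shopper to 0.4. In a continuously reweighted panel, the targets would be refreshed as the population shifts and the same loop rerun.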

In essence, this is not research. It is not advertising. It is not a consultancy.

It is market simulation as a service.

The economics of such an entity will be fundamentally different. It will require significant upfront investment—in data infrastructure, AI models, and proprietary measurement systems. It will incur ongoing costs of data generation and model training. But its value proposition will be equally fundamental: the ability to enhance a client’s internal intelligence system by an order of magnitude.

Pricing will inevitably follow outcomes.

Incumbents could, in theory, pivot. A holding company like Omnicom Group, a measurement giant like Nielsen, a consultancy like McKinsey & Company, a SaaS platform such as Salesforce, or an IT major like Infosys all possess pieces of the puzzle. Even AI-native firms such as OpenAI or Anthropic could extend in this direction.

But incumbency is often a handicap when the paradigm itself is shifting.

My instinct is that this space will not be claimed by those defending legacy revenue streams, but by those unburdened by them.

Which is why, if one were to place a bet today, it might be on a start-up—still in stealth—quietly assembling the pieces of a single-source, tech-forward, low-compliance measurement and modelling system.

Not measuring the market as it was.

But reconstructing it as it is becoming.

Such an entity will also be at the cutting edge when the age of AI-to-AI marketing, and later the age of abundance marketing, dawns.

Suggested Reading List:

- The Black Swan – Nassim Nicholas Taleb: On the limits of predictability and why models fail in complex, non-linear systems.

- Superforecasting: The Art and Science of Prediction – Philip E. Tetlock & Dan Gardner: A rigorous exploration of probabilistic thinking in uncertain environments.

- Seeing Like a State – James C. Scott: A powerful critique of top-down models that oversimplify complex human systems.

- The Age of Surveillance Capitalism – Shoshana Zuboff: On how digital platforms create asymmetries of information and control.

- Prediction Machines – Ajay Agrawal, Joshua Gans & Avi Goldfarb: Frames AI as a tool that lowers the cost of prediction.

- Competing in the Age of AI – Marco Iansiti & Karim R. Lakhani: On AI-native operating models and the emergence of intelligent enterprises.

- The Signal and the Noise – Nate Silver: Distinguishes between meaningful data and overwhelming noise in complex systems.